Data Warehouse Design: Practical Guide

Organizations increasingly need to transform vast amounts of raw operational data into actionable strategic insights. A Data Warehouse (DWH) serves as the cornerstone of this transformation, acting as a centralized, integrated repository designed specifically for reporting and business analysis. Unlike operational databases that focus on real-time transactions, a data warehouse is characterized by its subject-oriented, integrated, non-volatile, and time-variant nature. By separating analytical workloads from day-to-day operations, it ensures high-performance querying without compromising system stability. Understanding the architecture and design of a data warehouse is essential for building a "single version of truth" that empowers decision-makers across the enterprise.

What is Data Warehouse

A data warehouse (DW or DWH), also known as an enterprise data warehouse (EDW), is a system used for reporting and data analysis, and is considered a core component of business intelligence. It serves as a central repository of integrated data from one or more disparate sources.

The most widely accepted definition was proposed by W.H. Inmon, often regarded as the father of data warehousing, and later elaborated in his book Building the Data Warehouse, where he characterizes a data warehouse through four distinct attributes:

- Subject-oriented: The data in the warehouse is organized so that all the data elements relating to the same real-world object or event are linked together. This contrasts with operational systems, which are organized around business processes (like sales or payroll).

- Integrated: Data is gathered from multiple sources and consolidated into a consistent format. This involves resolving naming conflicts, differing units of measure, and inconsistent data structures to ensure a "single version of truth."

- Non-volatile: Data in the warehouse is stable. Once data is entered, it is not changed or updated. This is critical for maintaining a reliable historical record for analysis, as operational data is often overwritten by the most recent transactions.

- Time-variant: Unlike operational systems that focus on the "now," a data warehouse maintains a long-term historical perspective (e.g., the last 5 to 10 years). Every record in the warehouse contains a time element to show when the data was accurate.

Physically, a data warehouse is a database that is kept separate from an organization's operational databases (such as those used for transaction processing). This separation is vital because analytical queries are often complex and resource-intensive; running them on a separate system ensures that business intelligence activities do not slow down the day-to-day transaction systems.

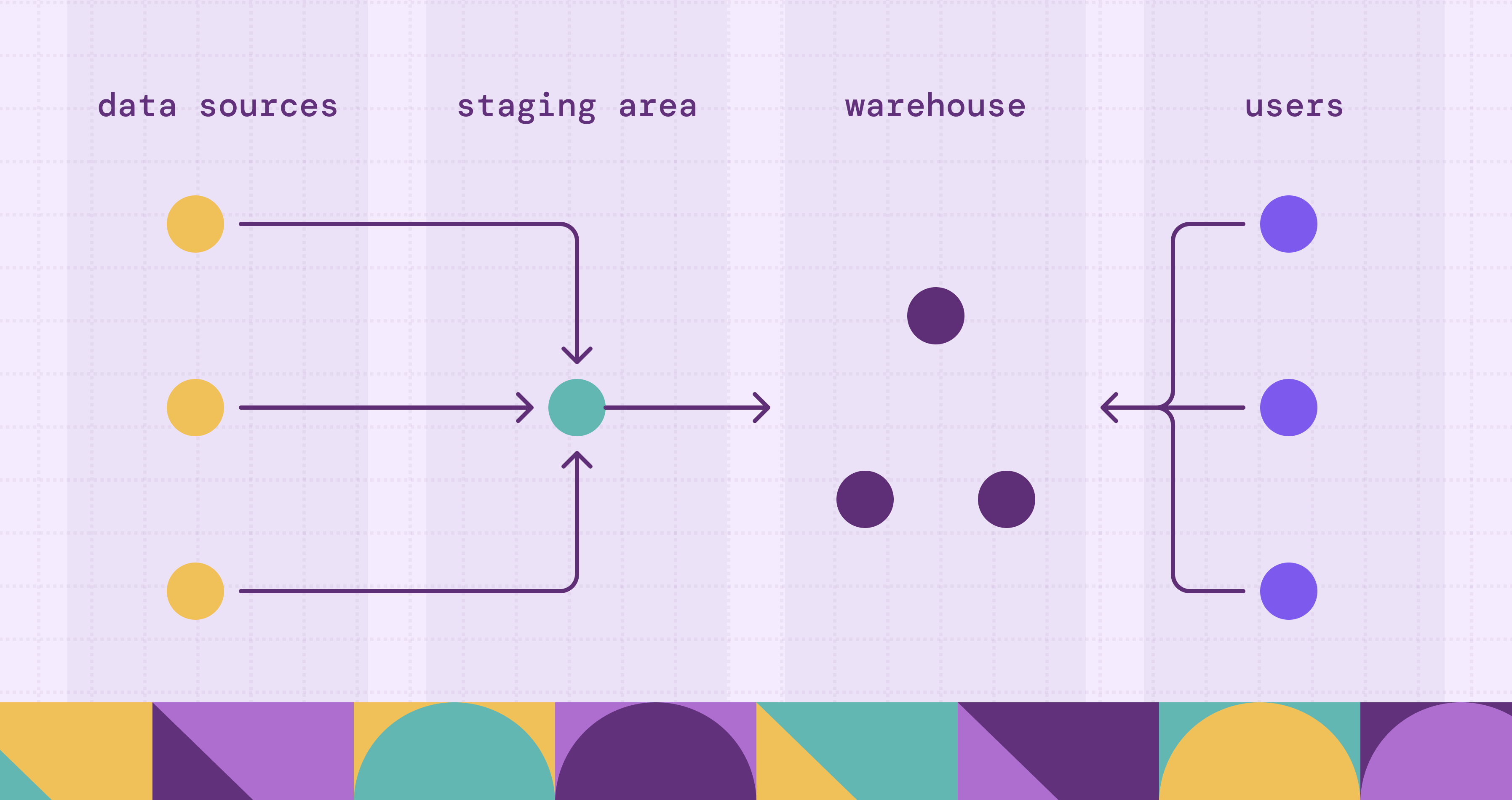

The data typically flows into the warehouse from various systems, such as Relational Databases, CRM, and ERP, and often passes through an intermediate "staging area." Here, the data undergoes ETL (Extract, Transform, Load) or ELT processes to ensure quality and consistency before being used for advanced data mining, online analytical processing (OLAP), and decision support.

What is Data Warehouse Design?

According to Inmon, data warehouse design is fundamentally different from classical application design. While traditional systems are process-oriented (designed to support specific business requirements and functions), data warehouse design is data-oriented. It focuses on the modeling of data and its integration across the entire enterprise.

The essence of design lies in shifting from a "functional silo" mindset to a "corporate-wide" perspective. Design is the process of defining how data from disparate operational environments, each with its own encoding, physical attributes, and naming conventions, can be transformed into a single, integrated, and non-volatile version of the truth. It is not merely about storage; it is about creating a stable architectural foundation that can support "Decision Support Systems" (DSS) by providing a historical record that remains consistent even as operational systems change.

Steps in Data Warehouse Design

Inmon emphasizes a "Top-Down" approach to design. Unlike the iterative, requirement-driven spiral of operational systems, the data warehouse is built by understanding the underlying data of the enterprise first. Following the methodology in Building the Data Warehouse, the design process involves these critical steps:

1. Building the Corporate Data Model The process begins at the highest level of the enterprise. You must identify the major "subject areas" of the corporation (e.g., Customer, Product, Transaction). This step involves defining the relationships between these entities without getting bogged down in technical details. This model acts as the "blueprint" for the entire warehouse.

2. Physical Design and Granularity Support The most important technical decision in Inmon's design is determining the level of granularity. Granularity refers to the level of detail or summarization held in the units of data. Design must account for high-level granularity (for performance) while maintaining low-level detailed data (for deep analysis). This step also involves deciding on the physical partitioning of data to ensure the system remains manageable as it grows to multiple terabytes.

3. Designing the Data Integration (ETL) Layer This is the "plumbing" of the design. Based on the data model, designers must map source systems to the warehouse. This includes:

- Transformation: Converting different codes (e.g., "M/F" vs "1/0" for gender) into a single corporate standard.

- Integration: Ensuring that when data comes from two different systems, it is unified into one record in the warehouse.

4. Creating the Time-Variant Structure Since a data warehouse is a collection of "snapshots" over time, the design must explicitly define how time is captured. This involves adding time elements (days, weeks, months) to every primary key in the warehouse, ensuring that the system can track changes in data values over years, not just current status.

5. Data Mapping and Loading The final design step is the movement of data. This involves identifying the "System of Record", the most reliable source for any piece of data, and designing the frequency and methods for moving that data from the operational environment into the integrated warehouse environment.

Types of Data Warehouse Architectures

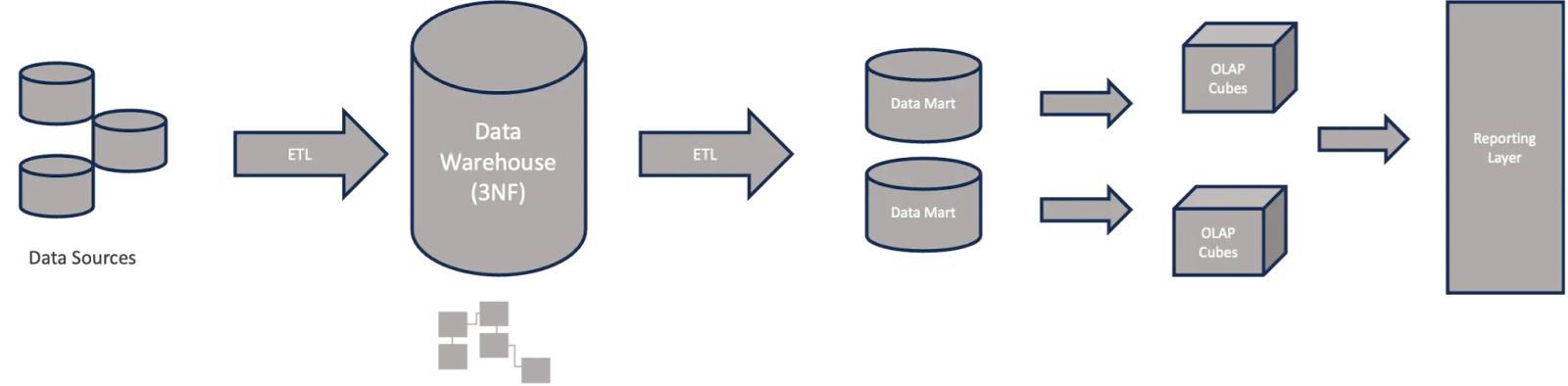

The architecture of a data warehouse determines how data is physically and logically organized to support analytical processing. Based on the principles established by Inmon and evolving industry standards, there are three primary architectural patterns:

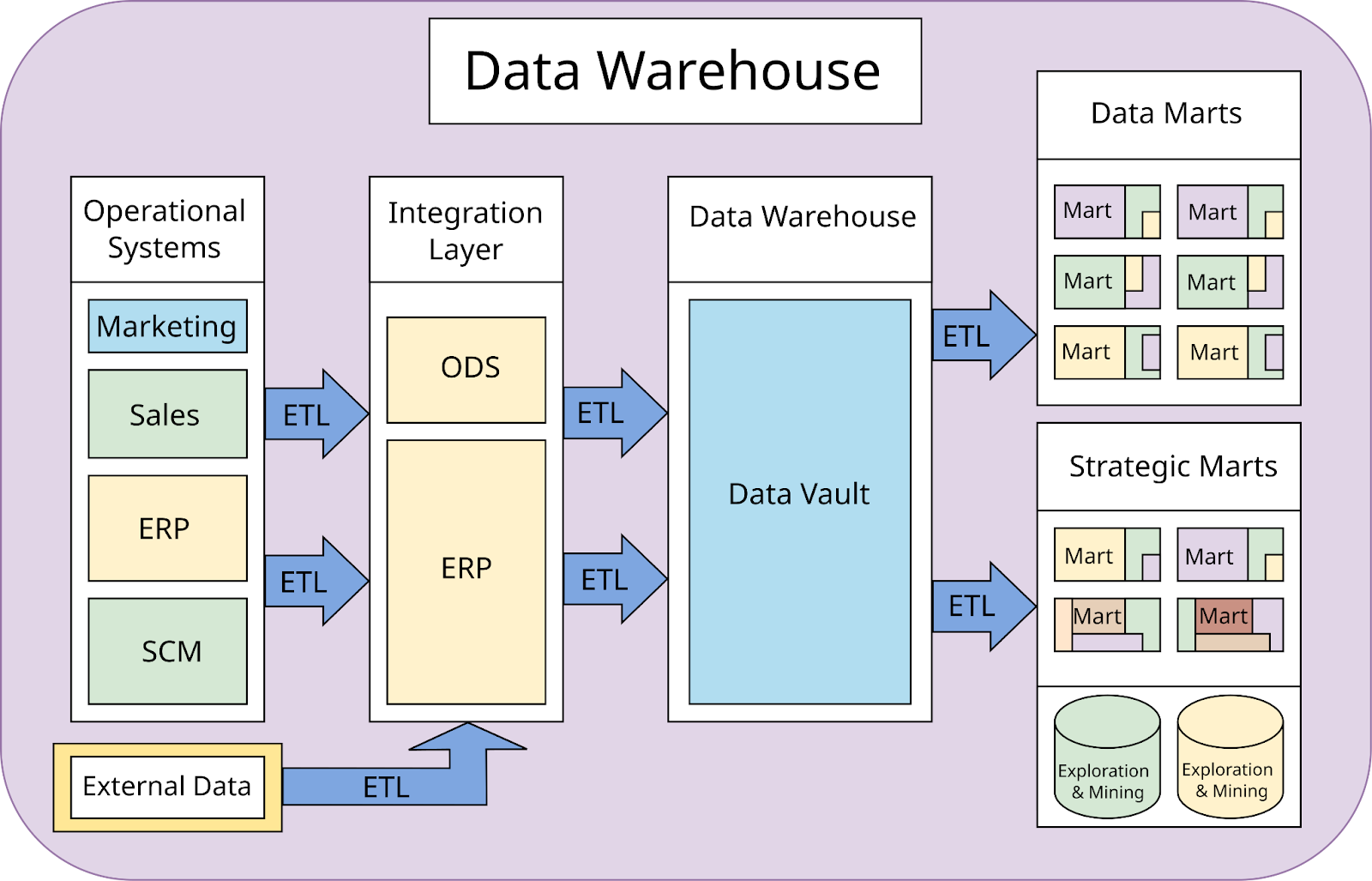

1. The Corporate Information Factory (Inmon’s Top-Down Architecture)

Commonly referred to as the "Hub-and-Spoke" architecture, this is the classic approach championed by Inmon. In this model, the Enterprise Data Warehouse (EDW) sits at the center as the "Single Version of Truth."

- How it works: Data is extracted from source systems, integrated, and loaded into a centralized EDW in Third Normal Form (3NF). From this central repository, smaller, subject-specific Data Marts are created for different departments (e.g., Marketing, Finance).

- Best for: Large organizations that require high data consistency and a robust, long-term foundation for enterprise-wide reporting.

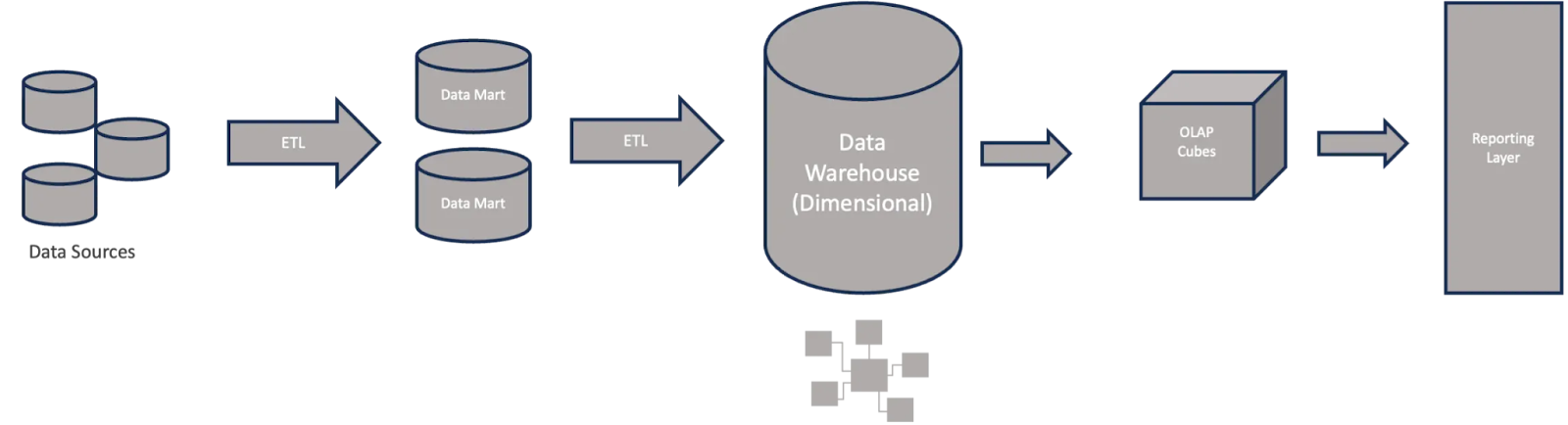

2. The Data Warehouse Bus Architecture (Kimball’s Bottom-Up Architecture)

Developed by Ralph Kimball, the principal author of the best-selling book The Data Warehouse Toolkit, this architecture focuses on speed and departmental needs while maintaining integration through shared standards.

- How it works: Instead of a centralized 3NF warehouse, this approach builds the warehouse as a collection of integrated Data Marts. Integration is achieved through "Conformed Dimensions" (standardized attributes like Date or Product ID that are used across all marts). Data is typically modeled using Star Schemas (Dimensional Modeling).

- Best for: Organizations needing faster implementation and those whose primary focus is on ease of use for end-user querying and OLAP.

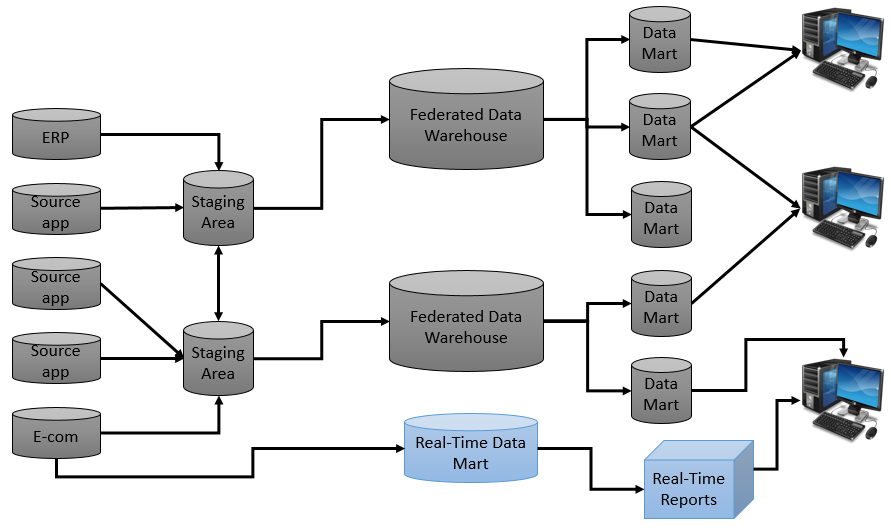

3. Federated Data Warehouse

A federated architecture is often a pragmatic solution for organizations that have acquired different systems through mergers and acquisitions.

- How it works: It leaves data in its existing distributed locations and uses a virtual or physical middleware layer to provide a unified view. It relies heavily on metadata to map disparate sources without necessarily moving all data into a single physical box.

- Best for: Situations where physical consolidation is technically or politically impossible, though it often faces performance challenges with complex queries.

4. Modern Cloud & Hybrid Architectures

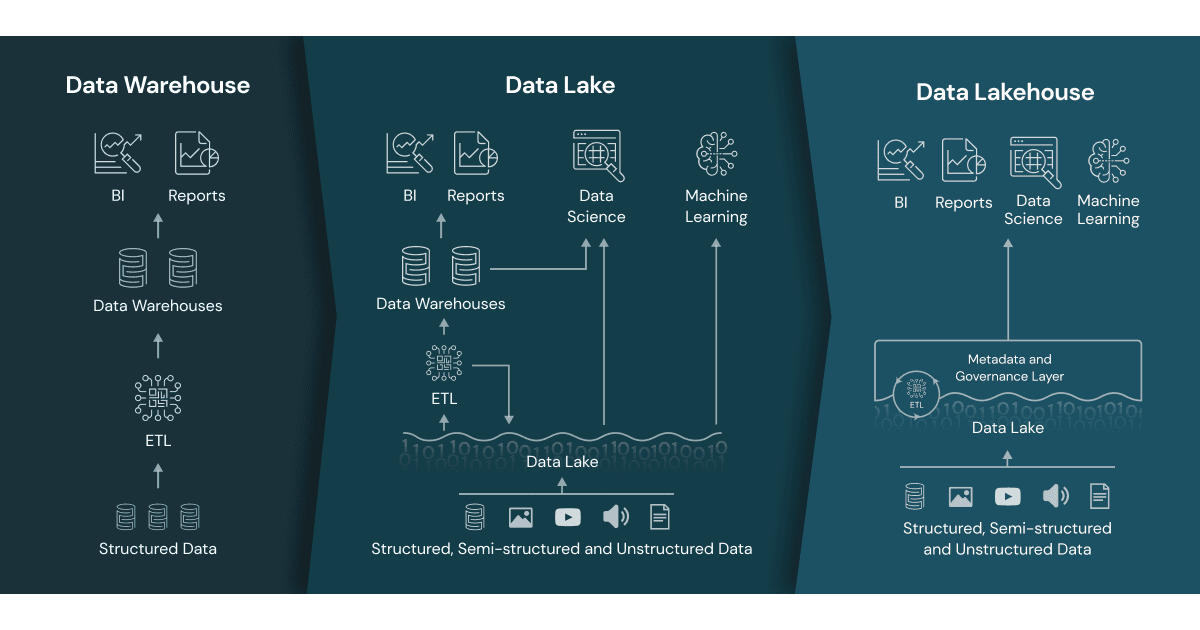

While the traditional Inmon, Kimball, and federated models provided the foundation for business intelligence, the explosion of "Big Data", characterized by high volume, variety, and velocity, necessitated a shift. Modern organizations have moved beyond rigid structures toward cloud-native and hybrid architectures.

The most significant evolution in this space is the Data Lakehouse, which bridges the gap between the structured, governed world of data warehouses and the flexible, low-cost world of data lakes.

The Rise of the Data Lakehouse

A Data Lakehouse implements data warehouse-like structures and data management features directly on top of the low-cost storage used for data lakes. It effectively provides a "best of both worlds" scenario: the reliability of Inmon/Kimball with the scale of modern cloud storage.

A Lakehouse architecture combines flexibility with strong data management by decoupling storage and compute for scalable, cost-efficient processing, storing data in open formats like Parquet or ORC for interoperability, and supporting ACID transactions via Delta Lake, Iceberg, or Hudi to ensure consistency. It handles structured, semi-structured, and unstructured data, and organizes it in layered Bronze–Silver–Gold stages to progressively refine raw data into business-ready datasets.

Conclusion

In conclusion, data warehouse design is a strategic endeavor that shifts the focus from functional silos to a unified corporate perspective. Whether adopting Inmon’s top-down "Hub-and-Spoke" model for maximum consistency or Kimball’s dimensional approach for agility, the ultimate goal remains the same: providing a reliable, historical foundation for Decision Support Systems (DSS). While traditional architectures like the Corporate Information Factory continue to provide robust governance, the rise of cloud-based Lakehouses and hybrid models demonstrates the field's ongoing evolution. Regardless of the specific technical framework, a successful data warehouse must prioritize data integration and quality, ensuring that the resulting insights are both accurate and enduring in a rapidly changing business environment.

Explore more with the forever-free PuppyGraph Developer Edition, or book a demo to see it in action.