What is Text to Graph?

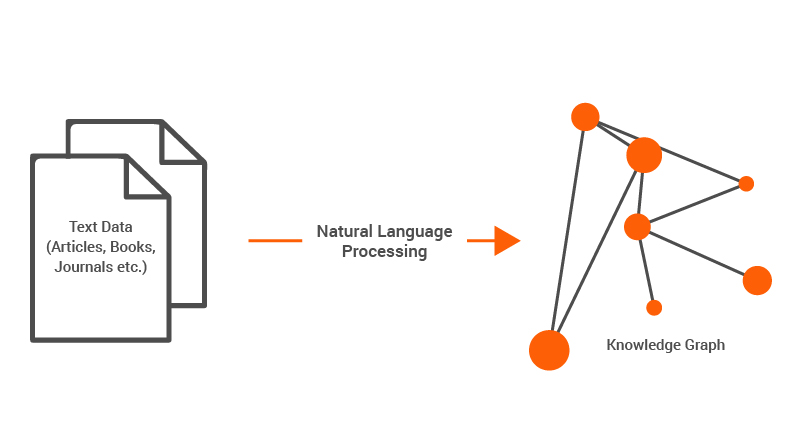

Much valuable information is locked inside unstructured text such as documents, emails, reports, and web pages. While humans can easily interpret these texts, machines struggle to extract relationships and meaning from them at scale. This gap has led to the emergence of text to graph techniques, which transform raw text into structured graph representations. By converting entities and their relationships into nodes and edges, text to graph enables powerful analysis, reasoning, and visualization.

With advances in natural language processing (NLP) and large language models (LLMs), this transformation is becoming more accurate and scalable than ever before. From knowledge graphs to AI-powered pipelines, text to graph is rapidly becoming a foundational technology across industries. This article explores what text to graph is, why it matters, how it works, and the architectures that power modern implementations.

What Is Text to Graph?

Text to graph is the process of converting text into a structured graph representation. In this graph, entities extracted from the text become nodes, while relationships between those entities become edges. The result is a machine-readable structure that preserves both meaning and context, enabling deeper analysis and reasoning.

For example, consider a sentence like: “Alice works at Acme Corp in Singapore.” A text to graph system would extract entities such as Alice, Acme Corp, and Singapore, and then identify relationships like “works at” and “located in.” These elements are then represented as a graph, allowing systems to query and analyze connections more efficiently than raw text.
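
As a rough illustration, the sentence above could be held in memory as a list of subject-relation-object triples. The relation labels `WORKS_AT` and `LOCATED_IN` are illustrative names, and attaching `LOCATED_IN` to Acme Corp rather than to Alice is one assumed reading of the sentence.

```python
# Triples for "Alice works at Acme Corp in Singapore."
# Relation labels are illustrative, not a standard vocabulary.
# Assumption: "in Singapore" describes Acme Corp, not Alice.
triples = [
    ("Alice", "WORKS_AT", "Acme Corp"),
    ("Acme Corp", "LOCATED_IN", "Singapore"),
]

# Nodes are the distinct entities that appear as a subject or an object.
nodes = sorted({entity for s, _, o in triples for entity in (s, o)})

# Edges are kept as an adjacency map: subject -> list of (relation, object).
edges = {}
for subject, relation, obj in triples:
    edges.setdefault(subject, []).append((relation, obj))

print(nodes)
print(edges["Alice"])
```

Storing edges by subject makes "what is Alice connected to?" a single dictionary lookup, which is the kind of access raw text cannot offer.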

At its core, text to graph bridges the gap between human-readable language and machine-interpretable structures. It transforms narrative content into structured representations, such as ontologies and knowledge graphs, that support graph algorithms and enable advanced reasoning beyond simple text analysis.

Why Convert Text Into Graphs?

The primary motivation for converting text into graphs lies in the limitations of unstructured data. While text is rich in meaning, it lacks explicit structure, making it difficult for machines to interpret relationships and dependencies. Graphs, on the other hand, are inherently designed to represent relationships, making them ideal for modeling complex systems.

One key advantage of graph representations is their ability to explicitly model relationships between entities. In a graph, the meaning of an entity is defined by its connections to other entities, enabling systems to reason over multi-hop relationships and discover hidden patterns within data.

Another important benefit is query flexibility. Graph databases enable expressive queries that can traverse relationships across multiple hops. For example, instead of searching for documents containing specific keywords, users can ask questions like “Who works with someone who used to work at Acme Corp?” Such queries are difficult to answer using traditional text-based approaches.
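
To make the two-hop question concrete, here is a minimal sketch over an in-memory edge list. The people, companies, and relation labels are invented for illustration, and `WORKS_WITH` is treated as a directed edge for simplicity. A graph database would express the same traversal in its own query language.

```python
# Hypothetical edges; names and relation labels are made up for illustration.
edges = [
    ("Bob", "WORKS_WITH", "Carol"),
    ("Dana", "WORKS_WITH", "Erin"),
    ("Carol", "USED_TO_WORK_AT", "Acme Corp"),
    ("Erin", "USED_TO_WORK_AT", "Globex"),
]

def subjects_of(relation, obj):
    """Return every subject that has `relation` pointing at `obj`."""
    return {s for s, r, o in edges if r == relation and o == obj}

# Hop 1: who used to work at Acme Corp?
former_staff = subjects_of("USED_TO_WORK_AT", "Acme Corp")

# Hop 2: who works with any of those people?
answer = set()
for person in former_staff:
    answer |= subjects_of("WORKS_WITH", person)

print(sorted(answer))
```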

Scalability is also a major factor. As data volumes grow, managing and analyzing relationships becomes increasingly complex. Graph-based systems are designed to handle large networks efficiently, making them well-suited for applications like social networks, supply chains, and knowledge graphs.

Finally, converting text into graphs enables integration across data sources. Structured graphs can unify information extracted from different documents, creating a coherent view of knowledge. This is particularly valuable in domains like healthcare, finance, and cybersecurity, where insights often depend on combining data from multiple sources.

How Text to Graph Works

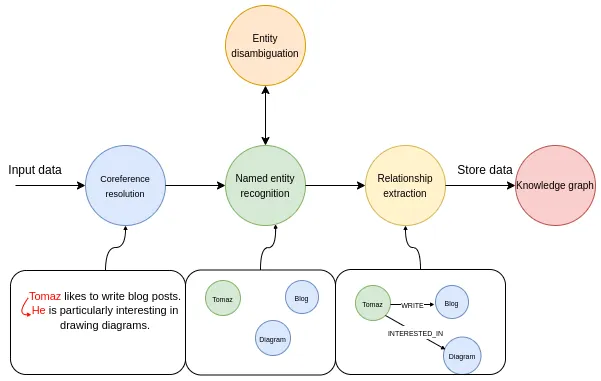

The process of converting text into a graph typically involves several stages, each designed to extract and structure information from raw text. While implementations may vary, most pipelines follow a similar sequence of steps.

The first step is text preprocessing. This involves cleaning the input data, removing noise, and preparing it for analysis. Techniques such as tokenization, sentence segmentation, and normalization are commonly used to ensure that the text can be processed effectively by downstream models.
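
A minimal preprocessing sketch using only the Python standard library might look like the following. The sentence splitter is deliberately naive (it would break on abbreviations such as "Dr."), so a production pipeline would more likely rely on a dedicated NLP library for this step.

```python
import re
import unicodedata

def normalize(text):
    """Apply Unicode NFKC normalization and collapse runs of whitespace."""
    text = unicodedata.normalize("NFKC", text)
    return re.sub(r"\s+", " ", text).strip()

def split_sentences(text):
    """Naive splitter: break after '.', '!' or '?' followed by whitespace."""
    return [s for s in re.split(r"(?<=[.!?])\s+", text) if s]

def tokenize(sentence):
    """Split a sentence into word tokens and single punctuation marks."""
    return re.findall(r"\w+|[^\w\s]", sentence)

raw = "Alice  works at Acme Corp.\nShe is based in Singapore."
clean = normalize(raw)
sentences = split_sentences(clean)
tokens = [tokenize(s) for s in sentences]

print(sentences)
print(tokens[0])
```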

Next comes entity recognition. In this stage, the system identifies key entities within the text, such as people, organizations, locations, and concepts. Named entity recognition (NER) models play a crucial role here, using machine learning techniques to detect and classify entities based on context.
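
Trained NER models are beyond the scope of a short snippet, so the sketch below swaps in a much simpler technique: a dictionary (gazetteer) lookup. It only shows the shape of the output such a stage typically produces, namely character spans with a type label. The `GAZETTEER` contents and the `find_entities` helper are hypothetical.

```python
import re

# Hypothetical gazetteer: surface form -> entity type.
# A real NER model would infer these labels from context instead.
GAZETTEER = {
    "Alice": "PERSON",
    "Acme Corp": "ORG",
    "Singapore": "LOCATION",
}

def find_entities(text):
    """Return (start, end, surface, type) tuples sorted by position."""
    found = []
    for surface, label in GAZETTEER.items():
        pattern = r"\b" + re.escape(surface) + r"\b"
        for match in re.finditer(pattern, text):
            found.append((match.start(), match.end(), surface, label))
    return sorted(found)

entities = find_entities("Alice works at Acme Corp in Singapore.")
for start, end, surface, label in entities:
    print(start, end, surface, label)
```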

Once entities are identified, the system extracts relationships between them. This is often referred to as relation extraction. The goal is to determine how entities are connected, such as “works at,” “located in,” or “owns.” This step may involve rule-based approaches, supervised learning models, or advanced neural networks.
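
The rule-based option can be sketched with a handful of regular expressions. The patterns and relation labels below are assumptions chosen for this example, and they treat any run of capitalized words as an entity, which is far cruder than what a supervised or neural extractor would do.

```python
import re

# A run of capitalized words stands in for an entity mention (a crude assumption).
NAME = r"[A-Z]\w*(?: [A-Z]\w*)*"

# Hypothetical patterns: each maps a surface phrase to a relation label.
PATTERNS = [
    (re.compile(rf"(?P<subj>{NAME}) works at (?P<obj>{NAME})"), "WORKS_AT"),
    (re.compile(rf"(?P<subj>{NAME}) is located in (?P<obj>{NAME})"), "LOCATED_IN"),
    (re.compile(rf"(?P<subj>{NAME}) owns (?P<obj>{NAME})"), "OWNS"),
]

def extract_relations(text):
    """Return (subject, relation, object) triples for every pattern match."""
    relations = []
    for pattern, label in PATTERNS:
        for match in pattern.finditer(text):
            relations.append((match.group("subj"), label, match.group("obj")))
    return relations

text = "Alice works at Acme Corp. Acme Corp is located in Singapore."
relations = extract_relations(text)
print(relations)
```

Rules like these are precise but brittle: a rephrasing such as "Alice is employed by Acme Corp" would be missed, which is one reason learned models are often preferred.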

After entities and relationships are extracted, the system constructs the graph. Nodes represent entities, while edges represent relationships. Additional attributes, such as timestamps or confidence scores, may also be included to enrich the graph.
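
One possible in-memory shape for such a graph is sketched below. The `Graph` class, the property names `confidence` and `observed`, and the sample values are all hypothetical and only illustrate how attributes can ride along on edges.

```python
from dataclasses import dataclass, field

@dataclass
class Graph:
    nodes: dict = field(default_factory=dict)  # node id -> properties
    edges: list = field(default_factory=list)  # (source, relation, target, properties)

    def add_node(self, node_id, **props):
        # Merge properties so repeated mentions enrich one node instead of duplicating it.
        self.nodes.setdefault(node_id, {}).update(props)

    def add_edge(self, source, relation, target, **props):
        # Make sure both endpoints exist before recording the edge.
        self.add_node(source)
        self.add_node(target)
        self.edges.append((source, relation, target, props))

# Hypothetical extraction output with made-up confidence scores and dates.
extracted = [
    ("Alice", "WORKS_AT", "Acme Corp", {"confidence": 0.93, "observed": "2024-01-15"}),
    ("Acme Corp", "LOCATED_IN", "Singapore", {"confidence": 0.88, "observed": "2024-01-15"}),
]

graph = Graph()
for source, relation, target, props in extracted:
    graph.add_edge(source, relation, target, **props)

print(sorted(graph.nodes))
print(graph.edges[0])
```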

Finally, the graph is stored in a graph database or similar system, where it can be queried and analyzed. This structured representation enables advanced operations such as pathfinding, clustering, and pattern detection, which are difficult to perform on raw text.
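
Pathfinding is a good example of an operation that only becomes possible once the structure is explicit. The sketch below runs a breadth-first search over a small, hypothetical adjacency list; graph databases typically provide this kind of traversal as a built-in feature.

```python
from collections import deque

# Hypothetical undirected graph stored as an adjacency list.
graph = {
    "Alice": ["Acme Corp"],
    "Acme Corp": ["Alice", "Singapore", "Bob"],
    "Bob": ["Acme Corp", "Globex"],
    "Singapore": ["Acme Corp"],
    "Globex": ["Bob"],
}

def shortest_path(graph, start, goal):
    """Breadth-first search; returns a list of nodes, or None if unreachable."""
    queue = deque([[start]])
    visited = {start}
    while queue:
        path = queue.popleft()
        node = path[-1]
        if node == goal:
            return path
        for neighbor in graph.get(node, []):
            if neighbor not in visited:
                visited.add(neighbor)
                queue.append(path + [neighbor])
    return None

path = shortest_path(graph, "Alice", "Globex")
print(path)
```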

Key Components of a Text to Graph Pipeline

A robust text to graph pipeline consists of several interconnected components, each responsible for a specific aspect of the transformation process. Understanding these components is essential for designing scalable and accurate systems.

The first component is the ingestion layer, which handles the collection and preprocessing of text data. This layer ensures that input data is cleaned, normalized, and formatted correctly before being passed to downstream modules. It may also include mechanisms for handling large volumes of data, such as streaming or batch processing.

The second component is the NLP layer, which performs tasks such as entity recognition, part-of-speech tagging, and dependency parsing. This layer provides the linguistic foundation for extracting meaningful information from text. Advances in deep learning have significantly improved the accuracy of these tasks, enabling more reliable extraction.

The third component is the extraction layer, where entities and relationships are identified. This layer may use a combination of rule-based systems and machine learning models to capture both explicit and implicit relationships. It is often the most complex part of the pipeline, as it requires understanding context and semantics.

The fourth component is the graph construction layer, which transforms extracted information into a graph structure. This involves creating nodes and edges, assigning properties, and ensuring consistency across the graph. It may also include deduplication and entity resolution to avoid redundant nodes.
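
A very simple form of entity resolution is to map each mention to a normalized key and merge mentions that share one. The suffix list and helper names below are assumptions for illustration; real systems usually add fuzzy matching, aliases, and contextual signals on top of this.

```python
import re

# Hypothetical suffixes treated as noise when comparing organization names.
LEGAL_SUFFIXES = {"corp", "corporation", "inc", "ltd", "llc"}

def canonical_key(name):
    """Lowercase, drop punctuation, and strip trailing legal suffixes."""
    words = re.sub(r"[^\w\s]", "", name.lower()).split()
    while words and words[-1] in LEGAL_SUFFIXES:
        words.pop()
    return " ".join(words)

def resolve(mentions):
    """Map each mention to the first mention seen with the same canonical key."""
    canonical = {}
    mapping = {}
    for mention in mentions:
        key = canonical_key(mention)
        canonical.setdefault(key, mention)
        mapping[mention] = canonical[key]
    return mapping

mapping = resolve(["Acme Corp", "ACME Corporation", "Acme Corp.", "Globex Inc"])
for mention, node in mapping.items():
    print(f"{mention!r} -> {node!r}")
```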

Finally, the storage and query layer enables users to interact with the graph. Graph databases provide efficient storage and support complex queries, allowing users to explore relationships and derive insights. This layer is critical for making the graph useful in real-world applications.

Text to Graph Using LLMs (AI)

The rise of large language models has significantly transformed the landscape of text to graph. With their remarkable flexibility in understanding and generating language, LLMs are particularly well-suited for extracting entities and relationships from complex text.

LLMs can perform tasks such as entity recognition and relation extraction in a single step, often with minimal training data. By leveraging their understanding of context and semantics, they can identify nuanced relationships that may be missed by traditional models. This is particularly valuable for domains with complex or specialized language.

Another advantage of LLMs is their ability to generate structured outputs. With the right prompting techniques, LLMs can produce graph representations directly, in formats such as JSON or RDF triples. This simplifies the pipeline and reduces the need for intermediate processing steps.
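
The sketch below shows how such output might be consumed, assuming the model was prompted to return JSON with a `triples` array of `subject`, `relation`, and `object` fields. That schema is an assumption made for this example, and the response is a hard-coded string rather than a real model call.

```python
import json

# Stand-in for a model response; a real system would get this string from an LLM call.
llm_response = """
{
  "triples": [
    {"subject": "Alice", "relation": "WORKS_AT", "object": "Acme Corp"},
    {"subject": "Acme Corp", "relation": "LOCATED_IN", "object": "Singapore"},
    {"subject": "Alice", "relation": "WORKS_AT"}
  ]
}
"""

REQUIRED_KEYS = ("subject", "relation", "object")

def parse_triples(raw):
    """Parse the JSON payload and keep only well-formed triples."""
    try:
        payload = json.loads(raw)
    except json.JSONDecodeError:
        return []
    if not isinstance(payload, dict):
        return []
    items = payload.get("triples")
    if not isinstance(items, list):
        return []
    triples = []
    for item in items:
        if not isinstance(item, dict):
            continue
        values = [item.get(key) for key in REQUIRED_KEYS]
        # Keep the item only if every required field is a non-empty string.
        if all(isinstance(v, str) and v for v in values):
            triples.append(tuple(values))
    return triples

triples = parse_triples(llm_response)
print(triples)
```

The third item lacks an `object` field and is dropped, which illustrates why even structured model output benefits from a defensive parsing step.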

However, LLM-based approaches also come with challenges. Ensuring consistency and accuracy can be difficult, especially when dealing with large datasets. Additionally, LLMs may produce hallucinated or incorrect relationships, requiring validation and post-processing.
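
One inexpensive post-processing check is to reject any triple whose relation is outside an agreed schema or whose entities never appear in the source text. The `ALLOWED_RELATIONS` set and `validate` helper below are hypothetical. Note that this check catches invented entities but not a wrong relation between two entities that are both present.

```python
# Hypothetical schema of permitted relation labels.
ALLOWED_RELATIONS = {"WORKS_AT", "LOCATED_IN", "OWNS"}

def validate(triples, source_text):
    """Split triples into accepted and rejected, with a reason for each rejection."""
    text = source_text.lower()
    accepted, rejected = [], []
    for subject, relation, obj in triples:
        triple = (subject, relation, obj)
        if relation not in ALLOWED_RELATIONS:
            rejected.append((triple, "unknown relation"))
        elif subject.lower() not in text or obj.lower() not in text:
            rejected.append((triple, "entity not in source"))
        else:
            accepted.append(triple)
    return accepted, rejected

source = "Alice works at Acme Corp in Singapore."
candidates = [
    ("Alice", "WORKS_AT", "Acme Corp"),
    ("Alice", "WORKS_AT", "Globex"),       # entity never mentioned in the source
    ("Alice", "MARRIED_TO", "Singapore"),  # relation outside the schema
]

accepted, rejected = validate(candidates, source)
print(accepted)
print(rejected)
```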

Despite these limitations, LLMs are rapidly becoming a key component of modern text to graph systems. Their flexibility and scalability make them a powerful tool for transforming unstructured text into structured knowledge.

Text to Graph Architecture Patterns

Modern text to graph systems can be designed using several architectural patterns, each with its own strengths and trade-offs. Choosing the right architecture depends on factors such as data volume, complexity, and application requirements.

One common pattern is the pipeline architecture, where each stage of the transformation process is implemented as a separate component. This approach provides modularity and flexibility, allowing each component to be optimized independently. However, it may introduce latency and complexity, especially for real-time applications.
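
In code, the pipeline pattern often reduces to a list of stage functions that each take and return a shared state. The stage names and the hard-coded extraction rule below are placeholders, meant only to show how stages can be swapped or reordered independently.

```python
from functools import reduce

def preprocess(state):
    # Collapse whitespace so later stages see clean text.
    state["text"] = " ".join(state["text"].split())
    return state

def extract(state):
    # Placeholder: a hard-coded rule standing in for NER and relation models.
    state["triples"] = []
    if "Alice works at Acme Corp" in state["text"]:
        state["triples"].append(("Alice", "WORKS_AT", "Acme Corp"))
    return state

def build_graph(state):
    # Turn triples into an adjacency map: subject -> list of (relation, object).
    graph = {}
    for subject, relation, obj in state["triples"]:
        graph.setdefault(subject, []).append((relation, obj))
    state["graph"] = graph
    return state

STAGES = [preprocess, extract, build_graph]

def run_pipeline(text, stages=STAGES):
    """Feed the state through each stage in order."""
    return reduce(lambda state, stage: stage(state), stages, {"text": text})

result = run_pipeline("Alice   works at\nAcme Corp in Singapore.")
print(result["graph"])
```

Because each stage only depends on the state dictionary, replacing `extract` with a stronger model does not require touching the other stages.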

Another pattern is the end-to-end architecture, where a single model performs the entire transformation from text to graph. This approach is often used with LLMs, which can handle multiple tasks simultaneously. While this simplifies the system, it may reduce transparency and control over individual steps.

A hybrid architecture combines the strengths of both approaches. For example, an LLM may be used for initial extraction, while rule-based systems and validation layers ensure accuracy and consistency. This approach balances flexibility with reliability, making it suitable for production systems.

Conclusion

Text to graph has emerged as a powerful approach for unlocking the value hidden in unstructured text. By transforming narrative content into structured representations such as knowledge graphs and ontologies, it enables machines to understand relationships, context, and meaning at scale. This shift allows organizations to move beyond simple keyword-based analysis toward deeper reasoning, more flexible querying, and richer insights across diverse data sources.

As advances in NLP and large language models continue to improve extraction accuracy and scalability, text to graph is becoming increasingly practical for real-world applications. While challenges such as consistency and validation remain, modern architectures, especially hybrid approaches, offer effective ways to balance flexibility and reliability. Going forward, text to graph will play a foundational role in building intelligent, data-driven systems across industries.

Explore more with the forever-free PuppyGraph Developer Edition, or book a demo to see it in action.